Agentic Layer

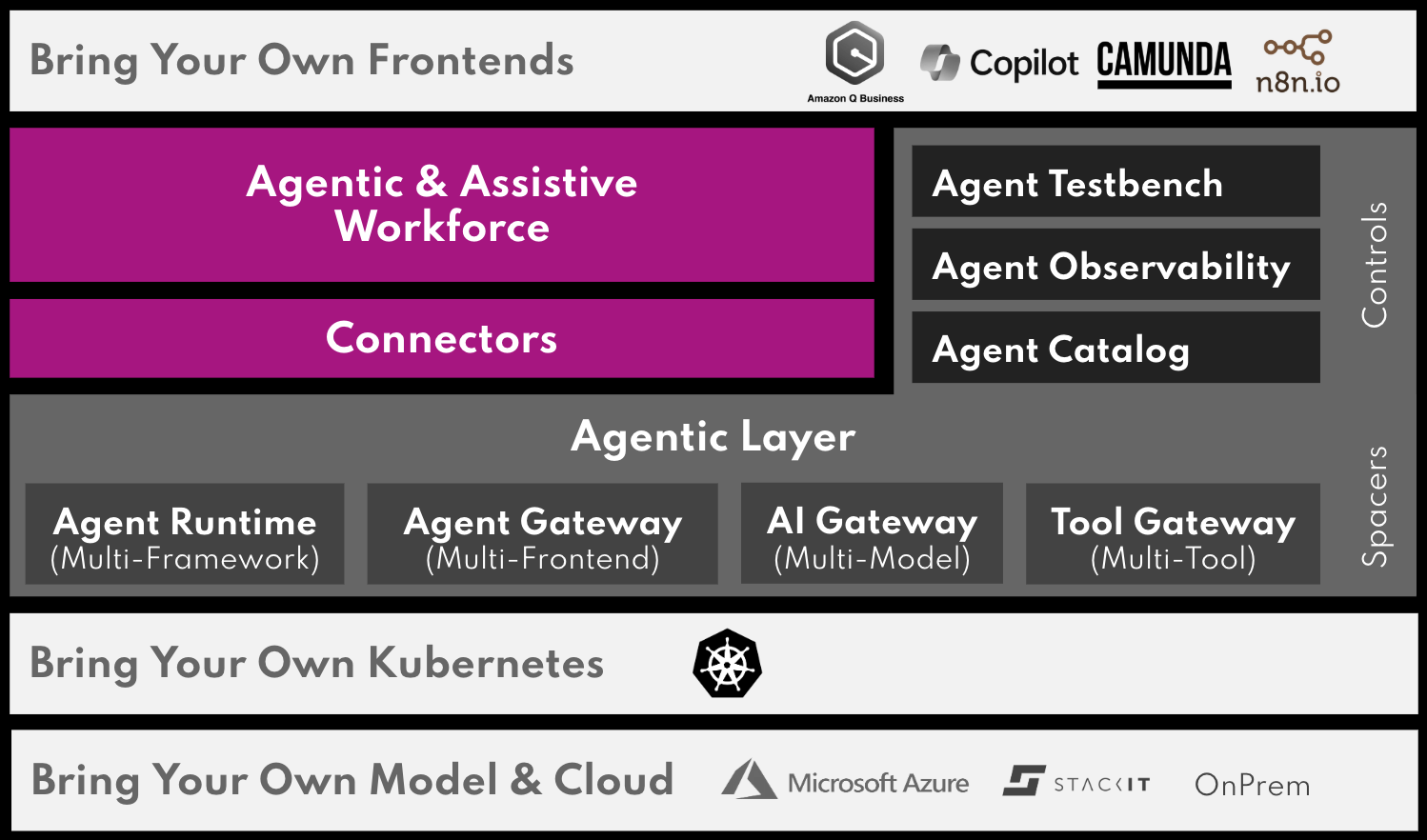

The Agentic Layer is an open-source, Kubernetes-native collection of building blocks for orchestrating multi-agent AI systems in a flexible, secure, and maintainable way. It acts as an abstraction layer and compliance guardian between your business applications and the fast-moving world of AI agents, models, and protocols.

For the goals, context, and design rationale behind the layer, see the architecture documentation.

Where to start

I’m installing the layer

Read Install the Agentic Layer for the end-to-end install order, then follow the per-component install how-to guides linked from there.

I’m building agents

Work through the News Showcase tutorial to deploy your first multi-agent system, then explore the agent how-to guides for goal-oriented guidance on templates, custom images, sub-agents, and workforces.

I’m evaluating the layer

Start with the architecture introduction and the building-block view for the design rationale and component roles.

I’m contributing

Each component lives in its own repository under github.com/agentic-layer. To add a new gateway or runtime implementation, start from the CRD contracts defined in the Agent Runtime Operator and from the existing implementation operators.

About this site

The documentation follows the Diátaxis framework. See How this site is organized for the documentation categories and for where each component’s content lives.